Image credit: Radiological Society of North America (RSNA)

News • Influence in diagnostic decisions

Too much trust in AI? X-ray boxes may lead radiologists astray

When making diagnostic decisions, radiologists and other physicians may rely too much on artificial intelligence (AI) when it points out a specific area of interest in an X-ray.

This is according to a new study published in Radiology, a journal of the Radiological Society of North America (RSNA).

“As of 2022, 190 radiology AI software programs were approved by the U.S. Food and Drug Administration,” said one of the study’s senior authors, Paul H. Yi, M.D., director of intelligent imaging informatics and associate member in the Department of Radiology at St. Jude Children’s Research Hospital in Memphis, Tennessee. “However, a gap between AI proof-of-concept and its real-world clinical use has emerged. To bridge this gap, fostering appropriate trust in AI advice is paramount.”

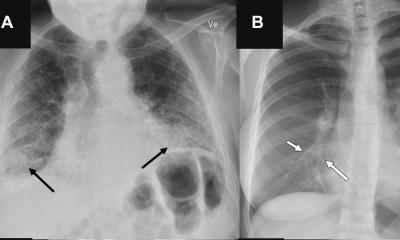

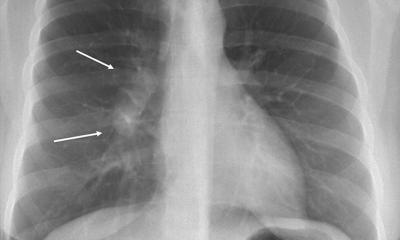

In the multi-site, prospective study, 220 physicians (132 radiologists, the remainder internal medicine or emergency medicine physicians) read chest X-rays alongside AI advice. Each physician evaluated eight chest X-ray cases alongside suggestions from a simulated AI assistant with diagnostic performance comparable to that of experts in the field. The clinical vignettes offered frontal and, if available, corresponding lateral chest X-ray images obtained from Beth Israel Deaconess Hospital in Boston via the open-source MIMIC Chest X-ray Database. A panel of radiologists selected the set of cases that simulated real-world clinical practice.

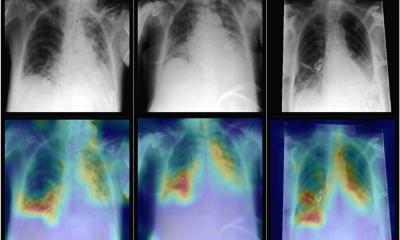

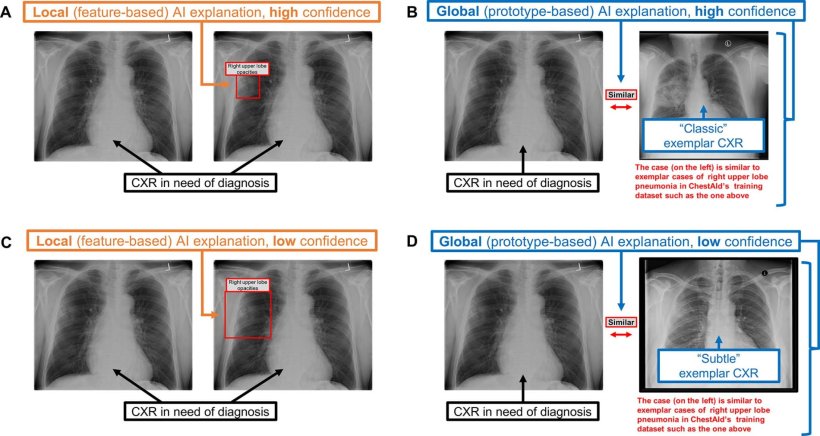

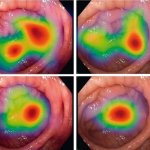

For each case, participants were presented with the patient’s clinical history, the AI advice and X-ray images. The AI provided either a correct or an incorrect diagnosis, accompanied by a local or a global explanation. In a local explanation, the AI highlights the parts of the image it deems most important. In a global explanation, the AI provides similar images from previous cases to show how it arrived at its diagnosis. “These local explanations directly guide the physician to the area of concern in real time,” Dr. Yi said. “In our study, the AI literally put a box around areas of pneumonia or other abnormalities.”

The reviewers could accept, modify or reject the AI suggestions. They were also asked to report their confidence level in the findings and impressions and to rank the usefulness of the AI advice. Using mixed-effects models, study co-first authors Drew Prinster, M.S., and Amama Mahmood, M.S., computer science Ph.D. students at Johns Hopkins University in Baltimore, led the researchers in analyzing the effects of the experimental variables on diagnostic accuracy, efficiency, physician perception of AI usefulness, and “simple trust” (how quickly a user agreed or disagreed with AI advice). The researchers controlled for factors like user demographics and professional experience.

The results showed that reviewers were more likely to align their diagnostic decision with AI advice, and spent less time considering it, when the AI provided local explanations. “Compared with global AI explanations, local explanations yielded better physician diagnostic accuracy when the AI advice was correct,” Dr. Yi said. “They also increased diagnostic efficiency overall by reducing the time spent considering AI advice.”

When the AI advice was correct, the average diagnostic accuracy among reviewers was 92.8% with local explanations and 85.3% with global explanations. When AI advice was incorrect, physician accuracy was 23.6% with local and 26.1% with global explanations. “When provided local explanations, both radiologists and non-radiologists in the study tended to trust the AI diagnosis more quickly, regardless of the accuracy of AI advice,” Dr. Yi said.
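To make the trade-off in these figures concrete, the short Python sketch below computes the percentage-point gap between local and global explanations in each condition. It uses only the four accuracy values reported above. The dictionary layout and the helper name `local_minus_global` are illustrative assumptions, not part of the study's analysis.

```python
# Mean physician diagnostic accuracy (%) as reported in the article.
reported_accuracy = {
    "ai_correct": {"local": 92.8, "global": 85.3},
    "ai_incorrect": {"local": 23.6, "global": 26.1},
}


def local_minus_global(condition: str) -> float:
    """Percentage-point gap, local minus global (hypothetical helper)."""
    scores = reported_accuracy[condition]
    # Round to one decimal to avoid floating-point noise in the difference.
    return round(scores["local"] - scores["global"], 1)


for condition in reported_accuracy:
    gap = local_minus_global(condition)
    print(f"{condition}: {gap:+.1f} percentage points")
```

By this calculation, local explanations were associated with accuracy 7.5 percentage points higher when the AI was right and 2.5 percentage points lower when it was wrong.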

Study co-senior author Chien-Ming Huang, Ph.D., John C. Malone Assistant Professor in the Department of Computer Science at Johns Hopkins University, pointed out that this trust in AI could be a double-edged sword because it risks over-reliance or automation bias. “When we rely too much on whatever the computer tells us, that’s a problem, because AI is not always right,” Dr. Yi said. “I think as radiologists using AI, we need to be aware of these pitfalls and stay mindful of our diagnostic patterns and training.”

Based on the study, Dr. Yi said AI system developers should carefully consider how different forms of AI explanation might impact reliance on AI advice. “I really think collaboration between industry and health care researchers is key,” he said. “I hope this paper starts a dialog and fruitful future research collaborations.”

Source: Radiological Society of North America

20.11.2024