Image source: Adobe Stock/alexey_boldin

Article • Possibilities and risks

AI in cardiology: so much is feasible – but is everything useful?

It might sound like science fiction, but it is reality in cardiology: with the help of artificial intelligence (AI), physicians can recognize from a patient’s headshot whether the person is suffering from coronary artery disease and is therefore at risk of myocardial infarction. But is that knowledge really useful? Professor Dr David Duncker calls for a differentiated and careful assessment of the possibilities and risks associated with AI. In particular, he recommends selecting the target group carefully in order to recognize at-risk patients early.

Report: Sonja Buske

AI, he says, can help analyse larger data volumes, but he cautions: ‘An algorithm is always only as good as the person who trained it. Biases and wrong decisions cannot be avoided. This ethical dimension has to be taken into consideration by all means.’

Photo: supplied

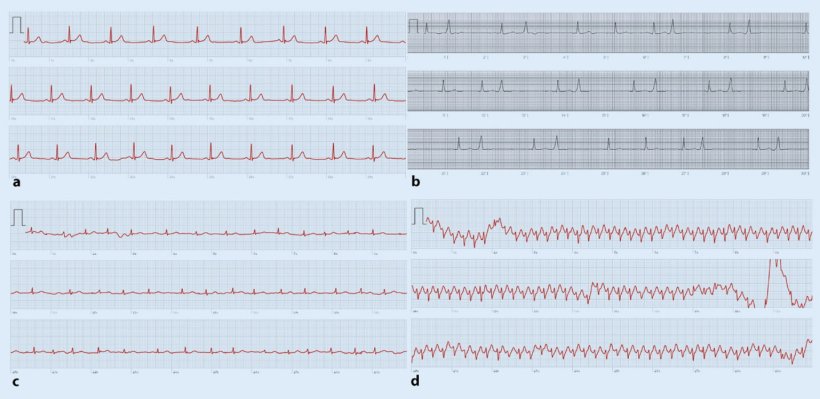

‘Atrial fibrillation is the most frequent type of arrhythmia,’ says Professor Duncker, Head of Rhythmology and Electrophysiology at the Department of Cardiology and Angiology at Hannover Medical School, adding that ‘it often causes sequelae such as strokes that can be avoided with early prophylaxis.’ He does, though, acknowledge the potential of AI, particularly when human resources are scarce and patient numbers increase. Algorithms can pre-scan huge data volumes in the background so that physicians have to deal only with suspicious findings. But proper selection of the target group is crucial. ‘Today, we can record ECGs with smartwatches and specifically look for arrhythmia,’ Professor Duncker explains, adding that ‘the users of such smartwatches, however, are usually much younger than the group at risk for arrhythmia. Therefore, we have to make sure the algorithms are applied to the correct target population.’

© German Cardiac Society

Screening recommendations for arrhythmia

Recently, the European Heart Rhythm Association (EHRA) issued new recommendations, co-authored by Professor Duncker, regarding the use of digital devices for arrhythmia screening. Systematic screening is recommended for persons over 75 years and those above 65 years who are at risk. Persons over 65 years without risk factors and those younger than 65 years with risk factors should be screened during their next doctor’s appointment. Germany is facing the problem that there are too many people in that age bracket, Professor Duncker points out: ‘The physicians simply don’t have the resources to review all ECGs. AI would have to preselect a smaller group that needs attention. Specialized apps on the smartphone would be a good alternative to smartwatches for that target group.’

Therapy differences caused by incorrectly trained AI

Professor Duncker, however, underlines that AI does not necessarily make better decisions than human beings: ‘Humans make mistakes and are biased. Studies have shown that not every patient receives equal treatment. Factors such as gender, ethnic background or religion play a role. When biases enter AI unchecked, differences in treatment will result which, in the worst case, may harm the patient. This is something we have to be aware of. We have to try to avoid training the algorithms incorrectly.’

Personalised therapies with the help of AI

In a few years, the cardiologist hopes, AI will be able to design personalised therapies, e.g. by calculating the effects of medication and optimally planning surgical interventions. But Professor Duncker warns that ‘we should not lower our scientific standards. New pharmaceuticals that are being tested must not be administered to each and every patient. We have to continue scientific studies. These standards also have to apply to AI and randomised studies have to be conducted to assess the effects.’

Profile:

Professor Dr David Duncker is Director of the Hannover Heart Rhythm Centre and Managing Senior Consultant and Head of Rhythmology and Electrophysiology at the Department of Cardiology and Angiology at Hannover Medical School. He is a member of the EHRA board and chair of the EHRA committee for digital communication.

26.08.2022