Image source: Gu et al., PNAS 2022 (CC BY-NC-ND 4.0)

News • Combining data science and machine learning

MRI image reconstruction: the next step for compressed sensing

University of Minnesota scientists and engineers have found a way to improve the performance of traditional Magnetic Resonance Imaging (MRI) reconstruction techniques, allowing for faster MRIs to improve healthcare.

“MRIs take a long time because you’re acquiring the data in a sequential manner,” explained Mehmet Akçakaya, an associate professor in the U of M College of Science and Engineering and senior author of the paper. “We want to make MRIs faster so that patients are there for shorter times and so that we can increase the efficiency in the healthcare system.”

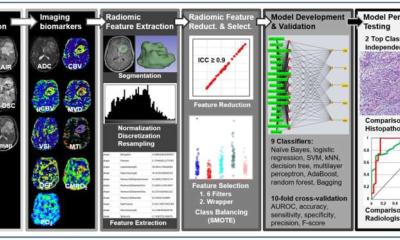

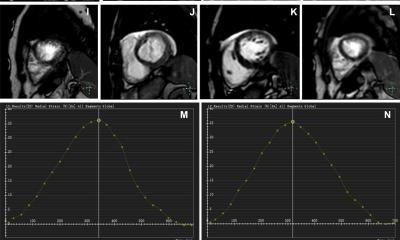

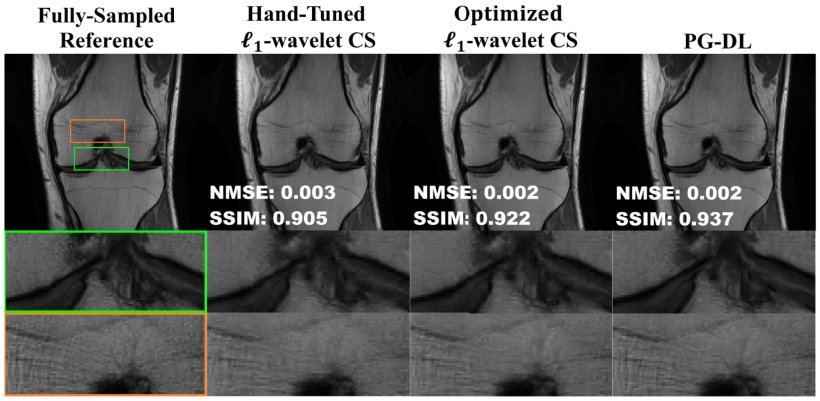

For the last decade, scientists have been making MRIs faster using a technique called compressed sensing, which uses the idea that images can be compressed into smaller sizes, akin to zipping a .jpeg on a computer. More recently, researchers have been looking into using deep learning, a type of machine learning, to speed up MRI image reconstruction. Instead of capturing every frequency during the MRI procedure, this process skips over frequencies and uses a trained machine learning algorithm to predict the results and fill in those gaps. While data indicate that deep learning can be a more effective alternative to traditional compressed sensing, there are concerns with using deep learning as well. For example, insufficient training data could bias the algorithm and cause it to misinterpret the MRI results.
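To make the idea of skipping frequencies and filling in the gaps concrete, here is a minimal toy sketch in Python. It is not the researchers' method: it assumes a one-dimensional signal that is mostly zero, keeps only a random subset of its frequencies, and recovers it with iterative soft-thresholding (ISTA), one standard classical compressed sensing solver. The function names and all parameter values (`demo`, `soft_threshold`, `lam`, `n_samples`) are illustrative assumptions.

```python
import numpy as np


def soft_threshold(z, lam):
    """Shrink the magnitude of each (possibly complex) entry toward zero by lam."""
    mag = np.abs(z)
    return z * np.maximum(1.0 - lam / np.maximum(mag, 1e-12), 0.0)


def demo(n=128, n_spikes=5, n_samples=48, lam=0.05, n_iter=300, seed=0):
    """Toy compressed sensing: recover a sparse signal from a subset of frequencies."""
    rng = np.random.default_rng(seed)

    # Toy "image": a 1-D signal that is zero almost everywhere (assumed sparsity).
    x_true = np.zeros(n)
    spike_positions = rng.choice(n, n_spikes, replace=False)
    x_true[spike_positions] = rng.uniform(1.0, 2.0, n_spikes)

    # "Fast scan": measure only a random subset of frequencies and skip the rest.
    mask = np.zeros(n, dtype=bool)
    mask[rng.choice(n, n_samples, replace=False)] = True
    y = np.fft.fft(x_true, norm="ortho") * mask

    # Baseline: treat the skipped frequencies as zero and invert directly.
    x_zero_filled = np.fft.ifft(y, norm="ortho")

    # ISTA: alternate a data-consistency step with a sparsity-promoting shrinkage.
    # A step size of 1 is valid because the masked unitary FFT has norm at most 1.
    x = np.zeros(n, dtype=complex)
    for _ in range(n_iter):
        residual = y - np.fft.fft(x, norm="ortho") * mask
        x = soft_threshold(x + np.fft.ifft(residual, norm="ortho"), lam)

    err_zero_filled = np.linalg.norm(x_zero_filled - x_true)
    err_cs = np.linalg.norm(x - x_true)
    return err_zero_filled, err_cs


err_zero_filled, err_cs = demo()
print(f"zero-filled error: {err_zero_filled:.3f}")
print(f"compressed sensing error: {err_cs:.3f}")
```

In this toy setting, the sparsity-aware reconstruction should land much closer to the original signal than simply leaving the skipped frequencies at zero. The threshold `lam` is the kind of parameter that has to be tuned in classical methods.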

Using a combination of modern data science tools and machine learning ideas, the U of M researchers have found a way to fine-tune the traditional compressed sensing method so that its image quality comes close to that of deep learning, an approach that could ease some of the concerns about relying exclusively on deep learning.

“There’s a lot of hype surrounding deep learning in MRIs, but maybe that gap between new and traditional methods isn’t as big as previously reported,” said Akçakaya. “We found that if you tune the classical methods, they can perform very well. So, maybe we should go back and look at the classical methods and see if we can get better results. There is a lot of great research surrounding deep learning as well, but we’re trying to look at both sides of the picture to see where we can find the best performance, theoretical guarantees and stability.”

Source: University of Minnesota

19.09.2022