Image source: TU Darmstadt

Award-winning research publication

Evaluating brain tumours with AI

Medicine, and medical diagnostics in particular, is one area where artificial intelligence (AI) is applied. For instance, algorithms can help analyse scans automatically.

An international and interdisciplinary team led by researchers from TU Darmstadt recently investigated how AI can help radiologists evaluate images of brain tumours. For this publication, the team won the Best Paper Award at the International Conference on Information Systems (ICIS), the world's largest information systems conference, prevailing over more than 1,300 other publications.

The study provides empirical data on the influence of machine learning systems (ML systems) on human learning. It also shows how much it matters to end users that the results of machine learning methods are comprehensible. These insights are relevant not only to medical diagnosis in radiology but to anyone who reviews ML output in the course of using AI tools such as ChatGPT every day.

Image source: TU Darmstadt

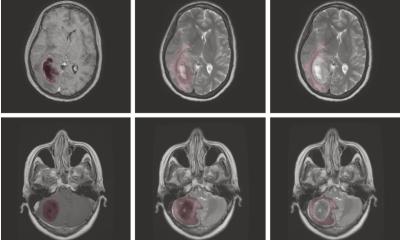

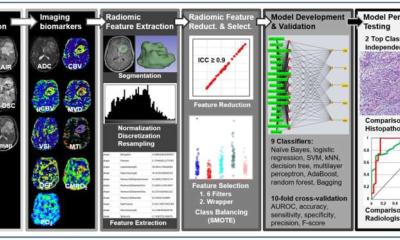

The research project, led by TU researchers Sara Ellenrieder and Professor Peter Buxmann from the Software and Digital Business Group of the Department of Law and Economics at TU Darmstadt, investigated the use of ML-based decision support systems in radiology, specifically in the manual segmentation of brain tumours in MRI images. The focus was on how radiologists can learn from these systems to improve their performance and their confidence in their decisions. The authors compared ML systems at different performance levels and analysed how explaining the ML output improved the radiologists' understanding of the results. The aim of the research is to find out how radiologists can benefit from these systems in the long term and use them safely.

For this purpose, the project team conducted an experiment with radiologists from various clinics. The physicians were asked to segment tumours in MRI images before and after receiving ML-based decision support. Different groups were provided with ML systems of varying performance or explainability. In addition to collecting quantitative performance data during the experiment, the researchers also gathered qualitative data through “think-aloud” protocols and subsequent interviews.

In the experiment, the radiologists performed 690 manual segmentations of brain tumours. The results show that radiologists can learn from the information provided by high-performing ML systems: by interacting with these systems, they improved their performance. However, the study also shows that when low-performing systems do not explain their output, the radiologists' performance can decline. Interestingly, explaining the ML output not only improved the radiologists' learning outcomes but also prevented them from learning false information. In fact, some physicians were even able to learn from the mistakes of low-performing but explainable systems.

“The future of human-AI collaboration lies in the development of explainable and transparent AI systems that enable end users in particular to learn from the systems and make better decisions in the long term,” summarises Professor Peter Buxmann.

Source: TU Darmstadt

27.12.2023