
Artificial intelligence in medicine will prevail

Artificial intelligence (AI) is changing our healthcare systems. It can help us detect diseases earlier, improve patient care and reduce healthcare costs. However, trust, rules and safety regulations, and broad data pools are all still lacking. How can we use AI successfully in healthcare systems, and what role will it play in the future?

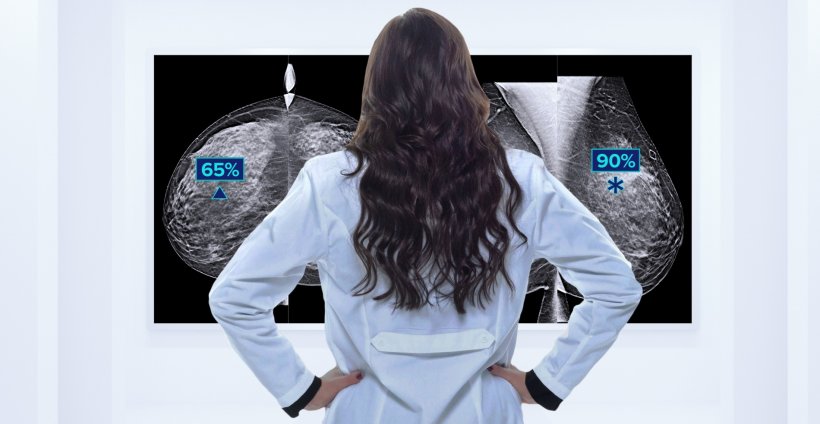

Today, artificial intelligence is already being used in areas with large data volumes, for example in radiology, where huge amounts of image data are generated. In 2019 alone, radiology generated an estimated 675 billion gigabytes of image data, which translates into 13.5 trillion cross-sectional images.[1] This amount of data could never be processed manually.

Usually, two experienced and focused radiologists are needed to read a CT scan or a mammogram. AI-based applications can significantly accelerate this process by automatically preselecting the images. The radiologists then perform the crucial part: the actual reading of the preselected images. With AI, the time needed for a procedure that can take physicians several hours is reduced by more than 50 percent. Many AI-based procedures have already been successfully implemented in healthcare, ranging from the digitisation and analysis of mammograms, through AI-based algorithms that assess digitised prostate biopsies for low-grade or high-grade tumours, to initial trials of digital pathology for cervical screening in cytology labs.

The human physician always has the final say

“AI can significantly simplify, accelerate and improve physicians’ tasks. It offers enormous potential to increase accuracy, safety and trust in diagnostics and therapy,” says Dr Christian Stoeckigt, medical affairs and AI expert at Hologic. “It is crucial to note, however, that the human physician will always have the final say. AI solutions can support physicians, but they will never replace them. This is where trust comes in.”

But to what extent can radiologists, pathologists and cytotechnologists trust AI-based image analysis functions? How can we be sure that the algorithms used in cancer diagnostics do not overlook abnormal cells? “In order to continuously improve the algorithms, we focus on unusual cases,” Stoeckigt explains. He cites the example of the AI-guided diagnostic functions of the Hologic digital cytology system, which were tested “in a customer’s lab using a known micro-carcinoma case. Within five minutes the AI algorithm had detected a small number of abnormal cells. This kind of result builds trust among physicians.”

It is equally important to gain patients’ trust in AI-based processes, since without trust there can be no acceptance of artificial intelligence in diagnostics and therapy. “In my opinion, healthcare providers and clinicians have to make joint efforts to educate patients that AI-guided diagnostics has the potential to deliver more accurate results and to accelerate the onset of treatment,” says the AI expert.

Wrong diagnosis: who is responsible?

Despite numerous examples of AI making patient care more effective and freeing up capacity in the healthcare system, a key question remains unanswered: who is responsible when AI delivers wrong results? Human beings, even physicians, make mistakes. But what happens when AI has contributed to a misdiagnosis? “We need an interdisciplinary task force that develops answers to these kinds of questions, as well as a more holistic approach to AI systems,” Christian Stoeckigt emphasises. “Cytologists, radiologists, oncologists, ethics and IT experts as well as clinicians have to work together and answer questions such as ‘What happens when an AI-guided diagnosis fails to detect a tumour?’ and ‘Are we allowed to use machine learning in life-and-death issues?’”

Moreover, the use of AI in healthcare systems needs rules and regulations. There are already some CE-marked algorithms for use in breast and prostate cancer imaging which support prioritisation and risk stratification. But so far, only initial steps have been taken to develop appropriate rules and regulations. “These steps are positive, but we need a stronger consensus,” says the microbiologist and computer science expert. “One of the major recent breakthroughs was the FDA’s change to its regulations, which now also address the handling of AI and offer more guidance on how to train AI systems.”

AI can help redress inequalities in healthcare

Successful use of AI-based applications in medicine requires high-quality data: artificial intelligence is only ever as good as its input data. Generating this data is a major endeavour, but the effort is necessary to ensure the data is complete and allows meaningful evaluation. In short, the data used must be representative and cover all relevant characteristics of the problem, such as tumour contours. However, these characteristics may not be known in advance, which increases the risk of bias and can lead to the algorithm being overfitted to its specific training data.

A further challenge is obtaining data from people who have limited access to healthcare. “We know, for example, that the breast tissue of women of colour is denser than the tissue of white women,” Stoeckigt explains. “But since, statistically, women of colour have less access to healthcare services, we have less data on women of colour. Therefore, most AI-guided mammography applications have a ‘white’ bias. That is something we need to change.” If scientists had better access to data from these patients, this bias, as well as ethnic and social inequalities in healthcare, could be avoided, which in turn would benefit all patients. “AI offers a huge opportunity to remove these inequalities. We should use it,” the AI expert says, emphasising that “the question of how we can improve patient care holistically is very important to us.”


Key technology of the future

Experts agree that artificial intelligence is already an important component of diagnostics today and will become indispensable as a ‘software assistant’. Its capability to analyse complex data in real time can be used in many areas of healthcare, be it therapy, organisation, workflow, research, education, patient self-assessment, pattern recognition in disease progression or drug development. Moreover, AI can support the analysis of data for preventive measures. “It is innovations of this type that motivate us and drive healthcare,” Christian Stoeckigt concludes, adding that in these endeavours “the patient and the best possible medical care will always be front and centre.”

Reference:

10.12.2021