Source: Shutterstock/kentoh

Interview • Diagnostics & therapy

AI: Hype, hope and reality

Artificial intelligence (AI) opens up a host of new diagnostic methods and treatments. Almost daily we read about physicians, researchers or companies developing an AI system that can identify malignant lesions or dangerous cardiac patterns, or personalise healthcare. ‘Currently, we are too focused on the topic,’ observes Professor Christian Johner, of the Johner Institute for Healthcare IT. At the same time, he recognises the enormous potential of the new technology. To leverage it, however, several challenges must be mastered and a proper framework established.

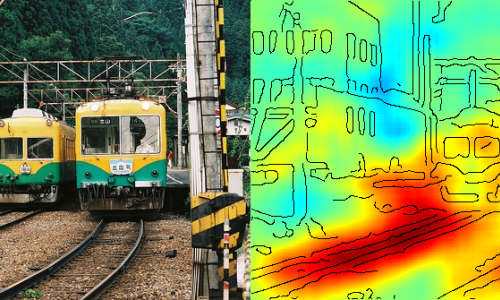

Asked which AI methods are currently being applied in healthcare, the professor said: ‘The best-known methods are machine learning methods that detect patterns in existing data pools and can thus find solutions autonomously. One of these is deep learning, which uses neural networks. Today we use it in imaging, for example. More recently, boosting procedures such as XGBoost, another machine learning approach, have been quickly gaining ground.

‘Moreover, AI is used to detect depression by analysing language and movement patterns. Other areas of application are production, selection and dosage of pharmaceuticals, error detection in patient records and signal detection in ECG to recognise arrhythmias. These are diagnostic methods that have been approved and are commercially available. Additionally, AI methods are used in therapy, for example in triage, where they support decision-making processes.’

Which types of AI innovations will have the greatest impact on healthcare?

‘Hard to tell. Any prognosis has to consider the hype curve. AI has reached peak hype in imaging. I’m sure that in other areas the hype is still to come. AI will sweep over us in waves at different points. The next focus will be on false diagnoses and treatments, which will generate considerable attention. These AI applications will become part of medical practice sooner or later, without the patient ever noticing their existence, a bit like anti-lock brakes in the car.’

Which regulations must manufacturers of AI healthcare applications meet?

‘The legislator neither can, nor wants to, adopt a separate set of laws for each medical device category. The Medical Device Directive, soon to be replaced by the Medical Device Regulation, defines the requirements. This legal framework has to be adjusted for the current AI procedures, with a focus on verification and validation of the systems, stability and reproducibility, as well as fitness for use – issues that are already regulated. The law demands that the benefits of the systems be proven quantitatively and that they outweigh the systems’ inherent risks.

‘This regulatory framework provides good guidance. We are currently working on specific standards for AI. In cooperation with the notified bodies, such as TÜV, I drafted a guideline covering these requirements. This not only refers to the devices themselves, but also to organisations – inter alia they must define and prove staff competencies.’

What limitations does or should AI have?

‘AI should not cement bias – something that happens quite easily depending on the data pool the developers use to train their algorithms. Furthermore, AI methods must not be used when there is no clear evidence of their benefit and when the risks outweigh the benefits.

‘We also have to discuss whether we need to regulate the economic framework together with the AI methods. For instance: Should health insurers be allowed to use AI for reimbursement decisions?’

Some people fear AI will replace physicians, particularly in imaging. Could this happen?

‘There’s already a shortage of physicians and healthcare will not become easier in the future. I hope that AI will ease physicians’ workload – after all, these systems are programmed to support them in routine tasks. In some areas, AI is more powerful than the human brain, but that’s not a new experience. A truck, we all agree, is better at transporting things than a human. That’s how we should look at AI: it is a tool that can perform certain tasks better than we humans. AI will give physicians more time to actually deal with patients.

‘This means we need physicians who can use the information culled from AI in a meaningful way, in the diagnostic workup, or who can assess and implement therapy suggestions made by an AI system. These skills create an added value for human intelligence.’

Profile:

Professor Christian Johner, physician and founder/owner of the Johner Institut GmbH, a consultancy for manufacturers of medical devices, teaches software architecture, software engineering, software quality assurance and medical IT at the University of Constance, Germany. In 2010-11 he was a research associate at Stanford University, USA, where he contributed to the development of the ontology editor Protégé and taught Modelling of Biomedical Systems.

20.11.2019