News • More than just a system crash

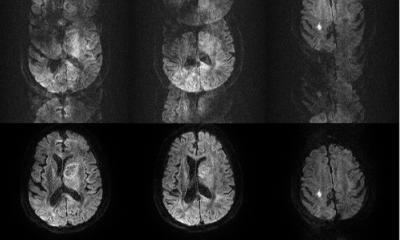

What are the limits of AI in clinical decision support systems?

Every day we hear news about Artificial Intelligence (AI) impacting more and more aspects of our lives. Stories about autonomous vehicles would probably top a current list of AI news.

With all the excitement surrounding these promising AI technologies, we are also starting to understand their limitations. In a recent Las Vegas traffic accident involving an autonomous bus and a truck, the cited cause of the accident was actually human error: the truck driver hit the bus. The bus had stopped to wait for the road ahead to clear, but it did not back up when the truck moved toward it. Why? Because the AI program was not trained to perform that task. The complexities involved in training AI are not obvious to many. You can train AI to distinguish cats from dogs, but without further training it will not be able to tell a white cat from a black cat straight out of the box. That requires additional training. Currently, this is limitation #1: an AI system needs to be trained for a specific task and cannot magically train itself. At least for now…

Big data is the foundation of AI. The more data that goes into the training set, the more precise the model. Warehousing all EMR (electronic medical record) data is a great start for feeding the AI engine so that it can uncover hidden patterns and do predictive modeling. However, EMR data has a characteristic that decreases the practicality of most predictive models: pretest probability, which is the probability that a patient has a target disorder before a diagnostic test result is known. Data is present in the EMR because clinicians put it there when they suspect a specific health problem. For example, a diagnostic troponin test is ordered because a physician suspects myocardial infarction. The physician doesn’t need an AI engine alert that myocardial infarction is suspected based on the troponin order in the EMR, because the physician already knew this when ordering the test. Two further complications sit on top of the pretest probability problem: missing data (a clinician forgot to enter it) and delayed data (in emergency situations clinicians do their job first and document in the EMR later). Taken together, the limitations of “big EMR data” in AI training sets become obvious.
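To make the pretest probability point concrete, here is a minimal sketch of the standard odds form of Bayes' rule: pretest odds multiplied by a test's likelihood ratio give posttest odds. The function name and all numbers below are assumptions chosen purely for illustration; they are not taken from any real troponin assay or patient population.

```python
def posttest_probability(pretest: float, likelihood_ratio: float) -> float:
    """Convert a pretest probability to a posttest probability via odds."""
    if not 0.0 < pretest < 1.0:
        raise ValueError("pretest must be strictly between 0 and 1")
    pretest_odds = pretest / (1.0 - pretest)
    posttest_odds = pretest_odds * likelihood_ratio
    return posttest_odds / (1.0 + posttest_odds)


# Hypothetical likelihood ratio for a positive test result (illustrative only).
LR_POSITIVE = 10.0

# Patient tested because a clinician already suspected the disorder
# (assumed pretest probability of 30%).
suspected = posttest_probability(0.30, LR_POSITIVE)

# Same positive result in an unselected patient
# (assumed pretest probability of 1%).
unselected = posttest_probability(0.01, LR_POSITIVE)

print(f"suspected: {suspected:.2f}, unselected: {unselected:.2f}")
```

With these assumed inputs, the same positive result corresponds to a posttest probability of roughly 0.81 for the suspected patient and roughly 0.09 for the unselected one. A model that only ever sees patients who were tested because someone already suspected the disorder is learning from the first situation, not the second.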

To overcome these limitations, “big EMR data” could be enhanced with other data that would give additional insights. Much of this data originates from everyday activities. Sleep patterns, physical activity, eating habits, trips to the liquor store, travel destinations and many more data points could be captured from credit/debit card transactions, sensors, GPS monitors, etc. This creates significant privacy problems that will require both technical and social solutions. The world today is very different from 100 years ago: we happily trade away more and more privacy for new technology that eases other problems and inconveniences in our lives. For example, we accept that automated toll collection systems gather data about our movements on the roads because they eliminate long lines at manual toll booths.

Do these limitations mean that AI has no place in healthcare? No. AI can help automate routine tasks. However, AI doesn't yet have the human brain's capacity to deal with the unknown or the unpredictable.

Source: HIMSS/Vitaly Herasevich

22.11.2017