Research

The future for big data in medicine

‘In IT we often casually say that Big Data is exactly what we can’t do yet,’ Professor Christoph Meinel, President of Germany’s Hasso-Plattner-Institute, remarked ruefully. We asked the computer science expert about the potential of Big Data in medicine and medical research.

‘Big Data means huge volumes of data that vary widely in structure, origin, quality and size. It is exactly these characteristics that make evaluation, analysis and calculation so challenging, because we are much better versed at handling uniformly structured data.’

Asked for examples of Big Data in medicine, he listed many: human genome data, data in hospital information systems, cancer registers, clinical studies, medical sensor data, image data, acoustic data and ultrasound data, as well as medical publications. ‘We are now trying to link these data sources intelligently, so that we can draw conclusions that advance medical research and, in turn, the treatment of diseases.’

‘For example, the success rate of radio- and chemotherapy in cancer treatment is below 25%, meaning that more than 75% of patients undergo agonising treatment without benefit. If we could predict more reliably, from a patient’s genetic or molecular profile, whether a treatment will work, we could rule out unsuitable treatments from the start and spare the patient this torturous ordeal. Previously, the analyses this requires could take several months. Now, with the in-memory technology we developed together with SAP, we can reduce the time these evaluations take to mere seconds.

‘The first product based on this technology is the SAP HANA database, which is ten thousand times faster than traditional databases. Why? Computer memory (RAM) is becoming ever cheaper, which makes new computer architectures with huge amounts of RAM possible. Entire databases can be held in RAM, so data can be analysed immediately, without time-intensive transfers from external storage. As a result, calculations run ten thousand times faster, and data from very different sources can be linked in real time.’

Are the data sets being analysed here actually Big Data?

‘Yes. We can now access these different “pots” of data stored in separate silos and, with the help of modern methods such as deep machine learning and neural networks, look for connections or detect suspected links. This can lead to the discovery of connections we had never even imagined. It also means we are turning our backs on the classic scientific principle of first establishing a theory and then proving it with experiments. Now we throw large amounts of data into a pot and leave it to high-performance computers to look for patterns and connections, often with surprising results. For example, if the analysis shows that many people suffering from a certain illness benefit from one particular medication out of a number of comparable drugs, then a doctor can most probably help his patient by prescribing this medication, even though he may not understand exactly why.’
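At its simplest, the medication example amounts to comparing outcome rates across comparable drugs in pooled patient records. The following is a minimal Python sketch under assumptions of our own: the `Record` fields, the function names, the `min_patients` threshold and the toy records are all hypothetical, and a plain per-drug response rate stands in for the machine-learning methods Meinel mentions. It reveals an association only; a real analysis would also have to account for confounders such as disease severity.

```python
from collections import defaultdict
from typing import NamedTuple, Optional


class Record(NamedTuple):
    """One pooled patient record (hypothetical schema for illustration)."""
    condition: str
    drug: str
    improved: bool


def response_rates(records, condition, min_patients=3):
    """Share of patients with `condition` who improved, per drug.

    Drugs given to fewer than `min_patients` such patients are skipped,
    because rates computed from tiny groups are unreliable.
    """
    treated = defaultdict(int)
    improved = defaultdict(int)
    for r in records:
        if r.condition != condition:
            continue
        treated[r.drug] += 1
        if r.improved:
            improved[r.drug] += 1
    return {
        drug: improved[drug] / n
        for drug, n in treated.items()
        if n >= min_patients
    }


def best_drug(records, condition, min_patients=3) -> Optional[str]:
    """Drug with the highest observed response rate, or None if none qualifies."""
    rates = response_rates(records, condition, min_patients)
    if not rates:
        return None
    return max(rates, key=rates.get)


# Hypothetical toy records, not real patient data.
records = [
    Record("X", "drug_a", True),
    Record("X", "drug_a", True),
    Record("X", "drug_a", False),
    Record("X", "drug_b", False),
    Record("X", "drug_b", True),
    Record("X", "drug_b", False),
    Record("Y", "drug_a", False),
]

print(best_drug(records, "X"))  # drug_a
```

Raising `min_patients` trades coverage for reliability: with `min_patients=4`, neither drug qualifies for condition "X" and `best_drug` returns `None` rather than guessing from three patients.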

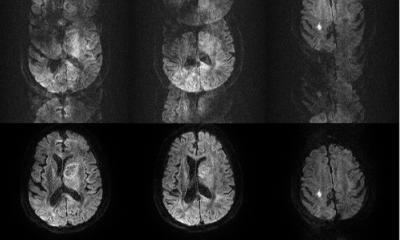

Could texts and images be correlated in Big Data analyses?

‘That does seem possible. It is about bringing images and texts together, i.e. the machine recognition of semantics. Texts are now fairly easy for machines to interpret, but how can a machine detect what is happening in a video? You can describe a video in text, but we are looking for procedures that automatically recognise what happens in a video and at what point in time, so that this information can be retrieved later.’

If this goes well, where will we be with Big Data in five years’ time? What will we be able to find out then that we can’t now?

‘We will certainly know a lot more about the structural make-up of humans, through gene analysis, protein analysis and molecular analysis, and also about processes in the body: what triggers what, and how. This will improve our ability to diagnose individual constitutions and lead to more appropriate treatment of diseases, i.e. personalised medicine. However, this will also require quite large financial investments. For the pharmaceutical industry it means developing individualised medications, which will revolutionise the industry, bearing in mind that its strategy so far has been to develop blockbuster drugs that help as many people as possible.’

Data protection

‘This is a key topic. Data obviously has to be protected, particularly where it allows conclusions to be drawn about personal information. However, neither the German data protection law nor the proposed European data protection regulation meets the requirements of data protection in the age of Big Data. Historically, the guiding principle has been data thriftiness, i.e. collecting as little data as possible. However, if Big Data is the shape of the future, it will be at odds with that principle.

‘Additionally, data may only be collected for a previously defined, specific purpose. This may be even more problematic, because it contradicts the Big Data approach, in which we first look at the data to see what we can find and then make the best of it without knowing the purpose in advance. We therefore need at least the possibility of special dispensation within the law to account for this, and a defined procedure for obtaining it. Otherwise, Europe will turn into a digital colony.’

PROFILE:

A mathematics and computer science graduate of Humboldt University, Berlin, Christoph Meinel is President and CEO of the Hasso-Plattner-Institute and Professor for Internet Technologies and Systems at the University of Potsdam (Germany). He is a member of acatech, the national German academy of science and engineering, and chairman of both the German IPv6 Council and the HPI-Stanford Design Thinking Research Programme. He heads the steering committee of the HPI Future SOC Lab and serves on various advisory boards, including SAP’s. His research focuses on IT and systems, and on Design Thinking research.

10.09.2015