News • Healthcare devices

Hearing aids could read lips through masks

A new system capable of reading lips with remarkable accuracy even when speakers are wearing face masks could help create a new generation of hearing aids.

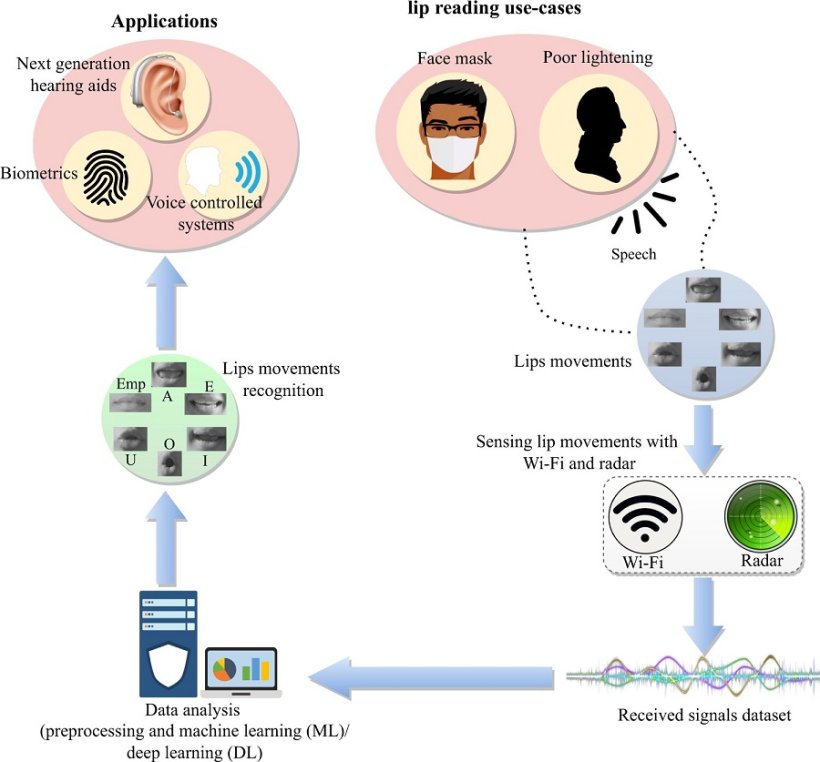

An international team of engineers and computing scientists developed the technology, which pairs radio-frequency sensing with artificial intelligence for the first time to identify lip movements. The system, when integrated with conventional hearing aid technology, could help tackle the "cocktail party effect," a common shortcoming of traditional hearing aids.

Currently, hearing aids assist hearing-impaired people by amplifying all ambient sounds around them, which can be helpful in many aspects of everyday life. However, in noisy situations such as cocktail parties, hearing aids' broad spectrum of amplification can make it difficult for users to focus on specific sounds, such as a conversation with a particular person.

One potential solution to the cocktail party effect is to make "smart" hearing aids, which combine conventional audio amplification with a second device to collect additional data for improved performance.

While other researchers have had success in using cameras to aid with lip reading, collecting video footage of people without their explicit consent raises concerns for individual privacy. Cameras are also unable to read lips through masks, an everyday challenge for people who wear face coverings for cultural or religious purposes and a broader issue in the age of COVID-19.

In a new paper published in Nature Communications, the University of Glasgow-led team outline how they set out to harness cutting-edge sensing technology to read lips. Their system preserves privacy by collecting only radio-frequency data, with no accompanying video footage.

To develop the system, the researchers asked male and female volunteers to repeat the five vowel sounds (A, E, I, O, and U) first while unmasked and then while wearing a surgical mask.

As the volunteers repeated the vowel sounds, their faces were scanned using radio-frequency signals from both a dedicated radar sensor and a Wi-Fi transmitter. Their faces were also scanned while their lips remained still.

Then, the 3,600 samples of data collected during the scans were used to "teach" machine learning and deep learning algorithms how to recognize the characteristic lip and mouth movements associated with each vowel sound.

Because the radio-frequency signals can easily pass through the volunteers' masks, the algorithms could also learn to read masked users' vowel formation.
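For readers curious about what "teaching" an algorithm to tell vowel classes apart looks like in practice, here is a minimal sketch. It is not the researchers' method: it uses a simple nearest-centroid classifier rather than the machine learning and deep learning models in the paper, and it runs on made-up synthetic vectors, not real radar or Wi-Fi data. The six labels (five vowels plus still lips) follow the article; the even split of 3,600 samples across classes, the feature layout, the noise level, and all function names are assumptions for illustration only.

```python
import random
from collections import defaultdict

# Five vowel classes plus a "still" class for motionless lips, as in the article.
LABELS = ["A", "E", "I", "O", "U", "still"]


def synthetic_sample(label, rng, noise=0.25):
    """Toy stand-in for one scan: a noisy one-hot vector (NOT real RF data)."""
    k = LABELS.index(label)
    return [(1.0 if j == k else 0.0) + rng.gauss(0.0, noise)
            for j in range(len(LABELS))]


def make_dataset(n_per_class, rng):
    """Build a shuffled list of (features, label) pairs."""
    data = [(synthetic_sample(lab, rng), lab)
            for lab in LABELS for _ in range(n_per_class)]
    rng.shuffle(data)
    return data


def fit_centroids(train):
    """'Training': average the feature vectors of each class."""
    sums = {}
    counts = defaultdict(int)
    for x, lab in train:
        if lab not in sums:
            sums[lab] = [0.0] * len(x)
        sums[lab] = [s + v for s, v in zip(sums[lab], x)]
        counts[lab] += 1
    return {lab: [s / counts[lab] for s in sums[lab]] for lab in sums}


def predict(centroids, x):
    """Return the label whose centroid is closest to x (squared Euclidean)."""
    def sqdist(c):
        return sum((a - b) ** 2 for a, b in zip(x, c))
    return min(centroids, key=lambda lab: sqdist(centroids[lab]))


def accuracy(centroids, test):
    """Fraction of held-out samples classified correctly."""
    correct = sum(predict(centroids, x) == lab for x, lab in test)
    return correct / len(test)


def run_demo(seed=0):
    rng = random.Random(seed)
    # 6 classes x 600 = 3,600 samples; the even split is an assumption.
    data = make_dataset(600, rng)
    split = int(0.8 * len(data))  # 80% for training, 20% held out
    centroids = fit_centroids(data[:split])
    return accuracy(centroids, data[split:])


if __name__ == "__main__":
    print(f"held-out accuracy on synthetic data: {run_demo():.2%}")
```

The accuracy this toy prints says nothing about real-world performance. It only illustrates the general workflow of collecting labeled samples, fitting a model on most of them, and checking predictions on the rest.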

The system proved to be capable of correctly reading the volunteers' lips most of the time. Wi-Fi data was correctly interpreted by the learning algorithms up to 95% of the time for unmasked lips, and 80% of the time for masked lips. Meanwhile, the radar data was interpreted correctly up to 91% of the time without a mask, and 83% of the time with a mask.

Dr. Qammer Abbasi, of the University of Glasgow's James Watt School of Engineering, is the paper's lead author. He said, "Around 5% of the world's population—about 430 million people—have some kind of hearing impairment.

"Hearing aids have provided transformative benefits for many hearing-impaired people. A new generation of technology which collects a wide spectrum of data to augment and enhance the amplification of sound could be another major step in improving hearing-impaired people's quality of life.

"With this research, we have shown that radio-frequency signals can be used to accurately read vowel sounds on people's lips, even when their mouths are covered. While the results of lip-reading with radar signals are slightly more accurate, the Wi-Fi signals also demonstrated impressive accuracy.

"Given the ubiquity and affordability of Wi-Fi technologies, the results are highly encouraging, which suggests that this technique has value both as a standalone technology and as a component in future multimodal hearing aids."

Source: University of Glasgow

11.09.2022