Exploding data gives new views of disease

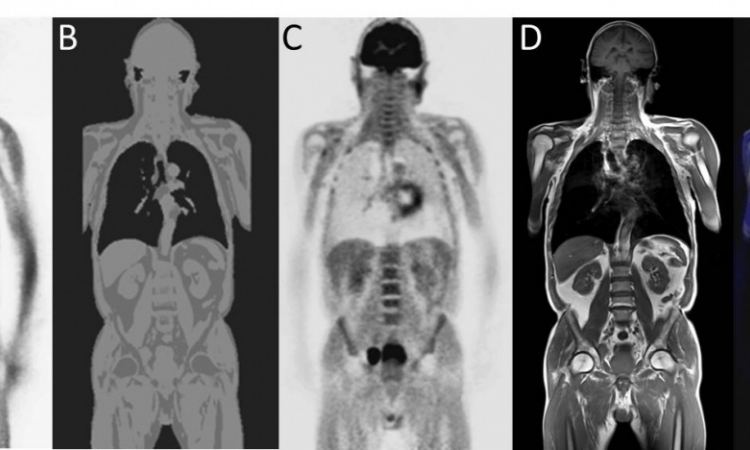

It is said that a picture is worth 1,000 words. Advanced medical imaging, such as 3D views of the heart or brain, has certainly proven the value of this statement by advancing our understanding of complex biological structures and disease processes.

So what happens if we explode a picture 100 times further? Is it worth 100 times more in aiding our understanding of disease? Absolutely, according to Bert ter Haar Romeny, professor of Biomedical Image Analysis (BMIA) at the Eindhoven University of Technology. It is what your own brain is doing every second, he said: taking each incoming image and creating a much larger image, a stack of images each dedicated to a different orientation, each adding a different perspective to your understanding of what you are looking at. Ter Haar Romeny was one of five speakers presenting at the IMAGINE session organized by the European Institute for Biomedical Imaging Research (EIBIR) for the European Congress of Radiology (ECR).
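
The idea of turning one image into a stack of orientation-specific images can be illustrated with a toy sketch. This is not the BMIA group's method, which the session report does not detail; it assumes a simple bank of elongated Gaussian filters, and the function names, filter sizes and test image are all hypothetical.

```python
import math

def oriented_kernel(theta, half=5, sigma_long=3.0, sigma_short=1.0):
    """Elongated Gaussian weights, rotated by theta radians, summing to 1."""
    c, s = math.cos(theta), math.sin(theta)
    weights = {}
    for dy in range(-half, half + 1):
        for dx in range(-half, half + 1):
            along = dx * c + dy * s      # distance along the orientation
            across = -dx * s + dy * c    # distance across the orientation
            weights[(dy, dx)] = math.exp(
                -0.5 * ((along / sigma_long) ** 2 + (across / sigma_short) ** 2))
    total = sum(weights.values())
    return {offset: w / total for offset, w in weights.items()}

def orientation_stack(image, n_orientations=8):
    """Return one filtered copy of `image` per orientation (zero outside borders)."""
    height, width = len(image), len(image[0])
    stack = []
    for i in range(n_orientations):
        kernel = oriented_kernel(i * math.pi / n_orientations)
        layer = [[sum(w * image[y + dy][x + dx]
                      for (dy, dx), w in kernel.items()
                      if 0 <= y + dy < height and 0 <= x + dx < width)
                  for x in range(width)]
                 for y in range(height)]
        stack.append(layer)
    return stack

# Hypothetical 21x21 test image containing a single horizontal line.
image = [[1.0 if y == 10 and 4 <= x <= 16 else 0.0 for x in range(21)]
         for y in range(21)]
stack = orientation_stack(image)

# Which orientation layer responds most strongly at the centre of the line?
centre = [layer[10][10] for layer in stack]
best = centre.index(max(centre))
print(len(stack), best)
```

The single 21 x 21 image becomes eight images of the same size, and the layer tuned to the horizontal orientation (index 0) responds most strongly on the line, which is the sense in which each layer contributes its own perspective.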

Friday's session was dedicated to the latest technological developments in Quantitative Image Analysis. Put another way, the recent work presented by research institutes, university groups and companies shows how far upstream of today's routine clinical practice new insights can be mined from the massive data sets generated by sophisticated medical imaging modalities. This research begins where radiologists typically stop: once the data sets have been read and filed.

The BMIA group in Eindhoven, for example, opens a complex toolbox to explore intricate brain fibers from high-volume diffusion MR images. This work is refining treatment for epilepsy and deep brain stimulation to the point where the Eindhoven group is asking whether we need to continue to plunge invasive needles into a target area of the brain when we can now see, and perhaps stimulate, the very fine circuits of the brain itself.

Using what he called "brain-inspired computing," Ter Haar Romeny showed how algorithms for quantitative analysis can be applied to understanding motion in three dimensions and the deformation of ventricular contractions to allow a non-invasive assessment of cardiac infarcts.

Meanwhile, files from PACS all over Europe are being mined by a group at the Computational Image Analysis and Radiology Lab (CIR) at the Medical University of Vienna. "Radiology workstations are very limited in their ability to find similar cases even within the same hospital," said Rene Donner from CIR-Vienna. Meta tags and keyword searches are primitive compared with the potential to capture data from the images themselves, giving radiologists a faster and richer tool for comparing cases during interpretation. An image being interpreted by a radiologist could automatically find similar images and retrieve cases with relevant pathologies, he explained. The database will also include text extracted from medical journals, case reports and even unstructured and multilingual text to support radiologists in interpretation.
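
Retrieving similar cases from the images themselves, rather than from tags, can be sketched in a few lines. This is only an illustration under stated assumptions, not the CIR system: it supposes each archived image has already been reduced to a short numeric feature vector, and the case identifiers, feature values and function names are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def most_similar(query, archive, k=2):
    """Return the ids of the k archived cases whose features best match the query."""
    ranked = sorted(archive.items(),
                    key=lambda item: cosine_similarity(query, item[1]),
                    reverse=True)
    return [case_id for case_id, _ in ranked[:k]]

# Hypothetical archive: case id -> feature vector derived from the image.
archive = {
    "case-A": [0.9, 0.1, 0.0],
    "case-B": [0.1, 0.8, 0.3],
    "case-C": [0.8, 0.2, 0.1],
}
query = [1.0, 0.1, 0.0]  # features of the image currently being read

print(most_similar(query, archive))
```

Here the query ranks `case-A` and `case-C` ahead of `case-B` because their feature vectors point in nearly the same direction. A real system would need far richer features and an index that scales beyond a linear scan.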

09.03.2013