© Fabian Vogel / TUM


AI and robotics support for ultrasound imaging

Ultrasound became established in medicine 60 years ago. The first remotely controllable ultrasound machine appeared 20 years ago. The next leap forward, towards an autonomous ultrasound system, is now imminent.

"We want to create a robotic system with artificial intelligence that knows the physics of ultrasound, analyses human physiology and anatomy and helps doctors decide what to do," says Prof. Nassir Navab from the Technical University of Munich (TUM), describing his vision. The Head of the Chair of Computer Aided Procedures & Augmented Reality at TUM is one of the few professors in the world to bring together researchers in artificial intelligence, computer vision, medicine and robotics in his laboratory. In the first systems, the researchers show how these technologies can be used in medical practices and operating theaters.

The research groups led by Prof. Navab published their findings on the technology in two journals: Annual Review of Control, Robotics, and Autonomous Systems, and Medical Image Analysis.

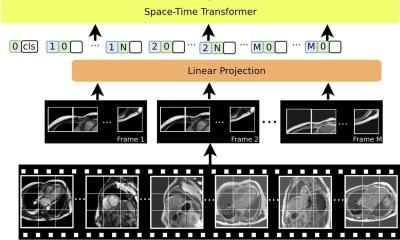

A newly developed robotic system now makes it possible to examine patients with an ultrasound device without a doctor present. The ultrasound probe is attached to a robotic arm, which places it on a patient's forearm or abdomen, for example, and examines these regions autonomously. The system independently displays vessels inside the body in 3D, visualizes physiological parameters such as blood flow velocity, and recognizes anomalies such as constricted vessels. This relieves doctors of routine tasks: they already have the results available and can concentrate more on patient care and counselling.

Beyond routine examinations, where ultrasound scans can already be performed autonomously and in a standardized way for research purposes, the autonomous system can also be used in the operating theater. For spinal operations, the researchers working with Prof. Navab rely on the concept of shared control. Surgeons can operate the ultrasound themselves in the conventional way, but can also hand over to the autonomous system in order to have their hands free. For example, during an injection into a vertebral joint, the system can deliver images of the region without disrupting the operation. It can also check the images using machine learning to find anomalies that indicate vertebral fractures.

Autonomous robotic ultrasound systems offer many benefits:

- Ultrasound images in 3D: Examination results are generally produced in 3D, rather than the usual 2D. This means that doctors no longer have to mentally picture the images in 3D themselves. In addition, connections become visible that are hard to spot in 2D.

- Comparability of data: The system can access all examinations over a period of years and even compare results from different patients. It runs a standard routine to record individual regions of the body, examines them for important issues and makes the data easy to compare. Is a vessel constricted? Has it changed since the last examination?

- Health scan without medical experts: In the future, cabins in medical centers or pharmacies equipped with these systems would be sufficient for autonomous examinations. Because this would be possible even without specialized medical personnel in attendance, the innovative technology could also be used at remote locations, for example on ships, in space or on remote islands.

Before the robotic arms with the ultrasound heads start moving, Prof. Navab believes that "confidence-building measures" are important. Patients need to familiarize themselves with the robotic system. To this end, his team is researching human-machine interaction to ensure a relaxed and safe environment. Getting to know the robot begins, for example, with a clear animation showing the steps in the examination and which movements the robot will make. After this introduction, patients know what to expect.

"To further consolidate trust, the system first demonstrates its capabilities in simple, non-critical interactions, such as a symbolic high five," explains PhD student Felix Dülmer. These measures are intended to make it clear that the system understands its environment and can react to movement, and that the equipment therefore poses no danger. This applies, for example, when the robot applies gel to the abdominal wall with its arm and moves the ultrasound probe back and forth along standardized paths with the specified pressure.

Prof. Navab assumes that people will quickly accept the technology: "People are already measuring their pulse, body temperature and blood pressure with their smartwatch or other digital applications," he explains. "They will certainly be open to having ultrasound examinations carried out with the help of robotic systems."

Source: Technical University of Munich

31.05.2024