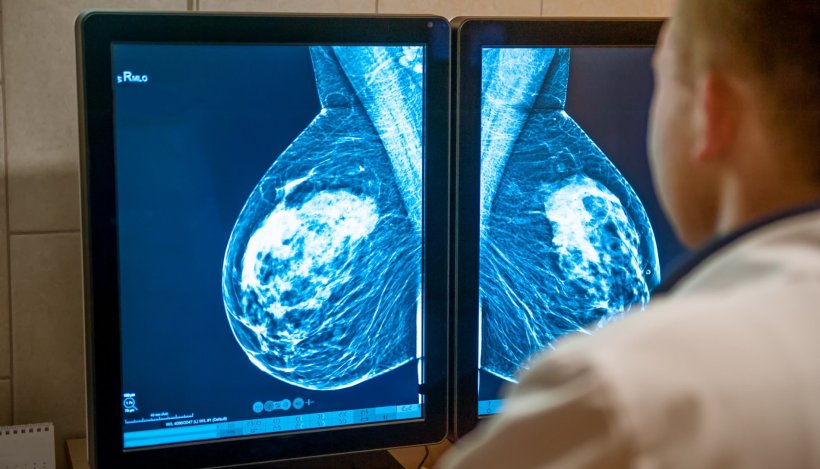

Image source: Adobe Stock/okrasiuk

News • Mammogram analysis

AI accurately classifies breast density

An artificial intelligence (AI) tool can accurately and consistently classify breast density on mammograms, according to a new study.

Breast density reflects the amount of fibroglandular tissue in the breast, as commonly seen on mammograms. High breast density is an independent risk factor for breast cancer, and because dense tissue can mask underlying lesions, it also reduces the sensitivity of mammography. Consequently, many U.S. states have laws requiring that women with dense breasts be notified after a mammogram, so that they can choose to undergo supplementary tests to improve cancer detection.

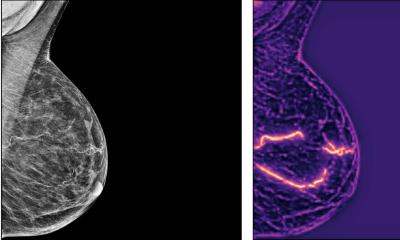

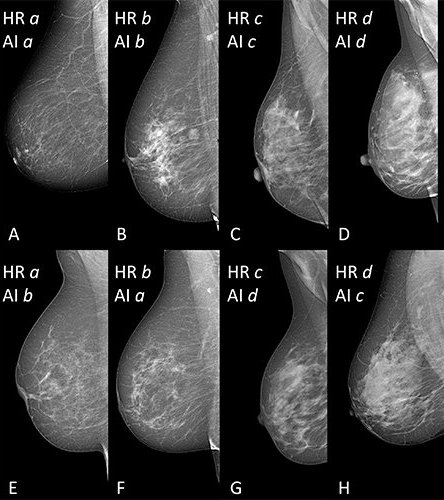

In clinical practice, breast density is visually assessed on two-view mammograms, most commonly with the American College of Radiology Breast Imaging-Reporting and Data System (BI-RADS) four-category scale, ranging from Category A for almost entirely fatty breasts to Category D for extremely dense. The system has limitations, as visual classification is prone to inter- and intra-observer variability.

To overcome this variability, researchers in Italy developed software for breast density classification based on deep learning with convolutional neural networks, a sophisticated type of AI able to discern subtle patterns in images beyond the capabilities of the human eye. The researchers trained the software, known as TRACE4BDensity, under the supervision of seven experienced radiologists who independently visually assessed 760 mammographic images.

The results were published in Radiology: Artificial Intelligence.

Image source: Magni et al, Radiology: Artificial Intelligence; ©RSNA 2022

External validation of the tool was performed by the three radiologists closest to the consensus on a dataset of 384 mammographic images obtained from a different center.

TRACE4BDensity showed 89% accuracy in distinguishing between low density (BI-RADS categories A and B) and high density (BI-RADS categories C and D) breast tissue, with an agreement of 90% between the tool and the three readers. All disagreements were in adjacent BI-RADS categories.

“The particular value of this tool is the possibility to overcome the suboptimal reproducibility of visual human density classification that limits its practical usability,” said study co-author Sergio Papa, MD, from the Centro Diagnostico Italiano in Milan. “To have a robust tool that proposes the density assignment in a standardized fashion may help a lot in decision making.”

Such a tool would be particularly valuable, the researchers said, as breast cancer screening becomes more personalized, with density assessment accounting for one important factor in risk stratification.
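As a rough illustration of the low- versus high-density comparison reported above, the sketch below collapses BI-RADS categories A to D into two groups and measures how often a tool's assignment matches a reference reading. It is not the study's code: the function names and the sample labels are made up for illustration, and the study's actual statistical methods are not described in this article.

```python
from typing import Sequence

# BI-RADS density categories in order, from almost entirely fatty (A)
# to extremely dense (D).
CATEGORIES = ("A", "B", "C", "D")
HIGH_DENSITY = {"C", "D"}


def is_dense(category: str) -> bool:
    """Return True for high density (C, D) and False for low density (A, B)."""
    if category not in CATEGORIES:
        raise ValueError(f"unknown BI-RADS density category: {category!r}")
    return category in HIGH_DENSITY


def binary_accuracy(predicted: Sequence[str], reference: Sequence[str]) -> float:
    """Fraction of cases where tool and reference agree on low vs. high density."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    matches = sum(is_dense(p) == is_dense(r) for p, r in zip(predicted, reference))
    return matches / len(predicted)


def all_disagreements_adjacent(predicted: Sequence[str], reference: Sequence[str]) -> bool:
    """Check that every four-category mismatch is off by at most one category."""
    return all(
        abs(CATEGORIES.index(p) - CATEGORIES.index(r)) <= 1
        for p, r in zip(predicted, reference)
    )


if __name__ == "__main__":
    # Hypothetical labels, invented for this example only.
    tool_labels = ["A", "B", "C", "D", "B", "C"]
    reader_labels = ["A", "B", "C", "C", "B", "B"]
    print(f"low/high accuracy: {binary_accuracy(tool_labels, reader_labels):.2f}")
    print("adjacent only:", all_disagreements_adjacent(tool_labels, reader_labels))
```

Note that a D-versus-C mismatch still counts as agreement on the two-group scale, since both fall in the high-density group, while a C-versus-B mismatch does not.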

“A tool such as TRACE4BDensity can help us advise women with dense breasts to have, after a negative mammogram, supplemental screening with ultrasound, MRI or contrast-enhanced mammography,” said study co-author Francesco Sardanelli, MD, from the Istituto di Ricovero e Cura a Carattere Scientifico Policlinico San Donato in San Donato, Italy.

The researchers plan additional studies to better understand the full capabilities of the software. “We would like to further assess the AI tool TRACE4BDensity, particularly in countries where regulations on women density is not active, by evaluating the usefulness of such tool for radiologists and patients,” said study co-author Christian Salvatore, PhD, senior researcher, Scuola Universitaria Superiore Pavia, and co-founder and chief executive officer of DeepTrace Technologies.

Source: Radiological Society of North America

18.03.2022