SIIM 2020: Glancing back at 40 years and ahead to the future

Forty years ago, anticipating the huge impact of computers in radiology, a group of visionaries formed the Radiology Information System Consortium (RISC). In 1989, RISC created the Society for Computer Applications in Radiology (SCAR) to promote computer applications in digital imaging. Those organisations became the Society for Imaging Informatics in Medicine (SIIM). At SIIM 2020, a virtual meeting, experts honoured those visions and predicted future imaging innovations.

Report: Cynthia E. Keen

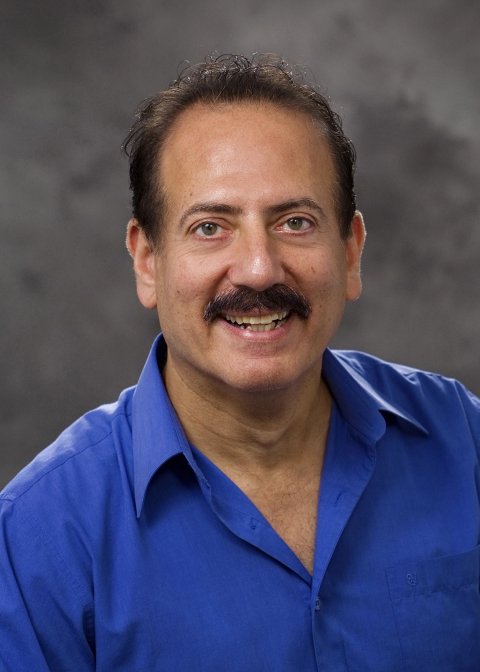

Eliot Siegel MD, Professor of Diagnostic Radiology and Nuclear Medicine and Vice Chair of Information Systems at the University of Maryland Medical Center, Baltimore, was the first radiologist to embrace digital imaging fully – he created the world’s first 100% filmless radiology department.

In 1993, the new Baltimore Veterans Affairs Medical System had the first hospital-wide picture archiving and communication system (PACS), becoming a cutting-edge imaging informatics laboratory. Siegel still heads its radiology department and all radiology departments of hospitals in the Veterans Affairs Maryland Healthcare System, and also works with software developer RadLogics on an AI app to detect Covid-19 on thoracic CT studies.

Like the session moderator, Paul Nagy PhD, Deputy Director of the Johns Hopkins Medicine Technology Innovation Center, Siegel is both sceptical and enthusiastic about AI’s future aid to radiologists. Nagy believed PACS would proliferate in the 1990s, a prediction that was early by 10-15 years. He cited the many technology enablers behind PACS acceptance worldwide: the proliferation of CT scanners, the need to manage hundreds of exam images, and advances in digital storage.

Figuring out what’s wrong with an image is much more difficult for a computer vision algorithm than figuring out what it has been trained to look for in the image

Eliot Siegel

He believes AI will not replace radiologists; rather, within 10-13 years, it could interpret and generate reports for most chest radiographs and mammograms and identify exams needing attention and radiologist review. Diagnostic AI apps, he added, are created from extensive training on image databases, but he raised questions: How does one verify that image databases are comprehensive enough? How can AI app accuracy be validated to meet any situation? What is the gold standard for AI app performance and who defines that? Just as apps developed for conventional 2-D mammography became obsolete due to mammography tomosynthesis, new imaging advances will de-value an AI diagnostic/interpretive app.

No matter how good, AI apps, Siegel pointed out, have inherent limitations. ‘Figuring out what’s wrong with an image is much more difficult for a computer vision algorithm than figuring out what it has been trained to look for in the image. A radiologist evaluates the entire image in a clinical context.’

‘One of the biggest challenges with AI software is that they perform impressively in research manuscripts, demonstrate a transparent and logical and exemplary performance for FDA clearance, but then perform substantially less well in actual clinical practice,’ Siegel observed. ‘Different radiology imaging equipment and different patient populations result in differences in images from the initial training and validation sets that do not distract humans, but can change diagnostic accuracy substantially. Radiologists “recalibrate” in new situations, but AI software does not have this ability, due to the FDA clearance limitations. If the FDA would allow local/regional modifications to help AI software to fine tune itself over time, this would be a very significant AI advance that could accelerate adoption.’

Siegel predicts that the next AI ‘killer app’ will be the use of Deep Learning by modality vendors to improve image acquisition, such as contrast and spatial resolution, or to replace or enhance iterative reconstruction. This type of app could also offer the potential for reductions in overall image reconstruction time, radiation dose, and/or the amount of intravenous contrast needed. AI apps will help radiologists’ efficiency and productivity, Siegel believes. The development of AI clinical ‘suites’ of applications that focus on a particular disease or body area could combine the findings of an AI diagnostic app with clinical databases that suggest specific diagnoses, recommend additional studies, and suggest different treatment possibilities.

Combinations of apps that work cooperatively in a single suite could also greatly benefit radiologists. Siegel suggested that, hypothetically, a renal disease suite could combine AI applications that segment the kidneys, search for renal masses, assess dynamic contrast enhancement to evaluate function over time, measure renal size, and characterise renal cysts. Other AI apps would then correlate the findings with a patient’s clinical status, such as hypertension, renal function, urinary protein levels, and medication history, to advise therapies.

We will see an emergence of reliable methods to share imaging data across service providers

Don Dennison

Within the USA, Dennison explained, viable business models do not exist to support image transfer interoperability among healthcare systems’ health information exchanges (HIEs), with respect to costs, funding, privacy and system security issues. Additionally, there are substantial issues in efficiently adding transferred exams and reports to recipients’ PACS and radiology information systems (RIS). New accession numbers and some unique identifiers are needed, procedure descriptions may need reconciliation, DICOM attributes in images may contain values not wanted in recipients’ records, and series descriptions may need modification to match the recipient’s norm.

In the USA, per-use commercial image transfer systems and patient-controlled image repositories have existed for a decade but have not proliferated, largely because of funding constraints. ‘Clinicians want immediate access to patients’ images. Patients agree. But only when healthcare reimbursement models give priority to utilising existing and relevant images from prior exams – and agree to fund access fees – could cloud-based image transfer proliferate. This will happen, and we will see an emergence of reliable methods to share imaging data across service providers, such as independent imaging centers and regional HIEs.’

AI tools, he suggested, could automate reconciliation of externally acquired image data, such as identifying a patient’s medical record number, creating an order with local accession number and study instance unique identifier, correlating and renaming the exam type, technique, and procedure, and cleaning up or purging unnecessary metadata.
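To make the reconciliation steps in that list concrete, here is a minimal, rule-based sketch in Python. It is not an AI tool and not any vendor's method: the study is modelled as a plain dictionary keyed by standard DICOM attribute keywords, and the lookup tables, the `EXT` accession prefix, the site name and the function names are all hypothetical, chosen only for illustration. A real deployment would query a master patient index and the local RIS instead.

```python
import uuid
from itertools import count

# Hypothetical lookup tables (assumptions for illustration only).
LOCAL_MRN_BY_EXTERNAL_ID = {("HOSP_A", "A-1001"): "MRN-778899"}
LOCAL_PROCEDURE_NAMES = {"CT THORAX W/O": "CT Chest without contrast"}
UNWANTED_ATTRIBUTES = {"InstitutionName", "ReferringPhysicianName", "StationName"}

_accession_counter = count(1)


def new_accession_number():
    """Return a local accession number; the 'EXT' prefix is an assumed convention."""
    return f"EXT{next(_accession_counter):07d}"


def new_study_uid():
    """Return a UUID-derived UID under the 2.25 root (at most 44 characters)."""
    return "2.25." + str(uuid.uuid4().int)


def reconcile(study, source_site):
    """Return a copy of an external study's metadata adapted to local conventions."""
    key = (source_site, study["PatientID"])
    if key not in LOCAL_MRN_BY_EXTERNAL_ID:
        # No automatic match: leave the decision to a human.
        raise LookupError(f"no local patient match for {key}")

    # Purge attributes the recipient does not want in its records.
    local = {k: v for k, v in study.items() if k not in UNWANTED_ATTRIBUTES}

    # Map the patient to the local medical record number.
    local["PatientID"] = LOCAL_MRN_BY_EXTERNAL_ID[key]

    # Create the local order identifiers.
    local["AccessionNumber"] = new_accession_number()
    local["StudyInstanceUID"] = new_study_uid()

    # Rename the procedure to the local norm when a mapping is known.
    description = study.get("StudyDescription", "")
    local["StudyDescription"] = LOCAL_PROCEDURE_NAMES.get(description, description)
    return local


external_study = {
    "PatientID": "A-1001",
    "AccessionNumber": "9912345",
    "StudyInstanceUID": "1.2.3.4.5",
    "StudyDescription": "CT THORAX W/O",
    "InstitutionName": "Hospital A",
}
local_study = reconcile(external_study, "HOSP_A")
```

The sketch returns a new dictionary rather than modifying the incoming one, so the original metadata stays available for audit. The steps that would call for AI in practice, such as matching patients without a shared identifier or mapping free-text procedure names, are reduced here to dictionary lookups.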

Image sharing and interoperability across healthcare enterprises

Some believe the impact of cloud technology will make DICOM CD/DVDs obsolete as the primary means of exam transfer. Don Dennison, a consultant and specialist in image interoperability, disagrees. ‘The CD will die a death of 1,000 stabs and take a very long time before being phased out, because it’s so easy to export images onto this still universally-used media.’

DICOM CD/DVDs are inexpensive, portable, and have a standard format for viewing. They are retained easily by patients and are used worldwide. Disadvantages include password protection, or the lack thereof, and the possible absence of the associated radiology reports.

Cloud-based imaging data exchange between healthcare systems, or by accessing a patient- or organisation-administered image repository, offers secure, reliable image transfer. This well-established technology flourishes within individual systems as health information exchanges (HIEs), and in countries with single-payer, publicly-funded health models. ‘The regional Diagnostic Imaging Repositories (DIRs) in Canada are an excellent example of the latter,’ he said. ‘It’s in everyone’s interest to reduce waste and risk associated with lack of image access. However, automated sharing among most DIRs is still extremely limited.’

Diagnostic workstations

Professor Elizabeth A Krupinski PhD, Vice Chair for Research in the Radiology and Imaging Sciences Department at Emory University, Atlanta, Georgia, is an expert on performance, productivity, and efficiency issues relating to diagnostic workstations and their use by radiologists. Her research has improved workstation ergonomics and optimised diagnostic image and data displays. Although diagnostic workstations and workspaces have evolved over 30 years, Krupinski doesn’t expect dramatic changes in some aspects in the near term. She does believe computer interfaces will continue to change, making it easier to manipulate image display and command functionality.

Some things are classic, Krupinski believes, with form and functionality inherited from early computers, such as the computer mouse. ‘Radiologists are used to the mouse, whether simple or complex in design. It’s inexpensive and a very ergonomic tool, making interaction with computers possibly better than with more high-tech devices. The mouse will be here perhaps forever. But it’s a habit for me,’ she conceded; new generations of radiologists have grown up interacting with smartphones and tablets, using swiping and tapping commands.

As always, manufacturers will spearhead changes, based on demand and competitive factors, she said. ‘Either smartphones and tablet devices will need to evolve to become practical for routine daily image interpretation, or workstation interfaces will become more like smartphones and tablets.’ Without financial motivation, she added, this won’t happen soon.

05.08.2020