Interview • ‘Dr’ Watson

Big data takes a big brain

Agfa HealthCare aims to tap IBM's Watson supercomputer to bring big data analytics to medical imaging. To find out how Watson can be harnessed to deliver information useful for patient care, European Hospital spoke with James Jay, Global Vice President and General Manager for Imaging IT at Agfa HealthCare.

Interview: John Brosky

Because no one can know everything, radiologists often consult colleagues before delivering a diagnosis. Soon they may be able to call upon the world’s smartest computer, a learning machine that reads everything and forgets nothing. This summer Agfa HealthCare announced it had joined the Watson Health Medical Imaging Collaborative, built around IBM’s Watson, the famously brainy supercomputer that beat the human champions of the television quiz show Jeopardy! and has even created 65 new gourmet recipes.

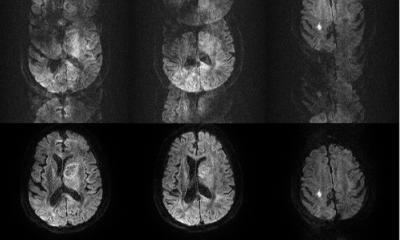

Turning from fun and games, Watson entered the medical field by consuming medical libraries and journals. A year ago it digested 315 billion medical imaging data points and 90 million unique records after IBM acquired Merge Healthcare and its database. Today Watson can read medical images, which IBM estimates account for 90% of all medical data.

Why is Agfa investing in the Watson Health Medical Imaging Collaborative?

James Jay: ‘It’s about us trying to find a way to deliver the most powerful analytics capabilities in the world to users on our Enterprise Imaging platform, so they can tap into Watson as part of the work they already do. That’s why IBM asked us to be part of this. They realise that, as powerful as Watson may be, the last thing healthcare professionals want is yet another computer added to their office! They already have electronic medical record systems, medication administration systems and imaging systems such as PACS.

‘Agfa’s Enterprise Imaging platform becomes a great vehicle to deliver Watson’s powerful capabilities as part of their daily work.’

Moving from the general idea of cloud computing to specifics, what can Watson do to help healthcare professionals?

‘One of the challenges with Watson is that it is capable of doing anything. The difficult question is what we want it to do. Ultimately, we want to approach hospitals and say that we can help with their work in a specific area, built on specific real-world use cases. An example would be lung cancer. Watson studied lung cancer at Memorial Sloan–Kettering Cancer Center, where it not only integrated lung images but also learned the specific pathologies around lung cancer. In a hospital we can help improve the analysis of the pixel data in lung CT studies, run comparative analytics and perhaps identify secondary findings. For example, a clinical team may be looking at the liver while a Watson-capable system sees a reason to look at images of the patient’s lungs as well, and finds a nodule. The radiologist would not have spotted this, because the doctor’s request was to look at the liver.’

Software already exists that can detect cancer. What is the difference between such medical analysis software and Watson analytics?

‘You can teach software to recognise specific patterns in a medical image, but that software will only be capable of looking for the specific patterns that have been coded. Watson is adaptive. It is a learning platform that uses data sets to make itself smarter when it looks at new images in the future. Watson reaches into a massive reference database with an enormous machine-learning capability. It can tap health records, radiology or pathology reports, doctors’ notes, medical journals, clinical care guidelines and published outcomes studies all at once. There are amazing things that can be done with a machine that learns!’

Agfa believes that Watson can help find ‘invisible, unstructured imaging data’. Where is this invisible imaging data?

‘There are several levels of information in every medical image. First, there is the patient information linked to the image, which is structured but used only for indexing the image. Then there is the radiology report, which is not structured. And then there is the pixel information contained in the image itself.

‘The patient information can be used for much more than indexing. Data taken only from the image, without going to the patient’s medical record, include the patient’s age, sex, height and weight, for example. These can be used to create comparative perspectives, to link that patient to a specific patient population and to enrich demographic information.

‘The unstructured radiology report can be ingested by a learning machine using text-analysis tools and turned into a wealth of information that can be mined. And, finally, there is an underlying analysis of the pixel data. The image the radiologist sees has been examined for a specific request, but it has not been examined as part of a wider comparative study, which may show that the pixel pattern represents something different when analysed from that perspective.’

Does Agfa want to become the interface between man and machine, between doctors and Watson?

‘To deliver big data analytics to clinicians in the future, you have to already be in front of them today, part of their daily work. They are not going to add more and more systems to look at; they want to do the opposite, to work on fewer and fewer systems.

‘Right now we have the attention of more than 10,000 users to whom we can deliver advanced capabilities through our Enterprise Imaging platform. This becomes a vehicle to aggregate the capabilities of very smart people out there developing analytics and other services helpful for healthcare professionals, our users.

‘So, yes, Agfa will become the aggregator of analytics for physicians and clinicians through the interface on the Enterprise Imaging platform.’

Profile:

A vice president with Agfa HealthCare, James Jay is General Manager for Imaging IT and serves on the Board of Directors of the company’s USA operations. He also holds five patents covering the display and comparison of image studies and the enhancement of workflow. With deep experience in developing medical device software and working in a regulated environment, James is actively involved in numerous healthcare IT associations and organisations.

06.11.2016