Image source: Unsplash/Rodion Kutsaev

Conversational user interfaces: a new gateway to healthcare?

The deployment of conversational user interfaces (CUIs), or chatbots, in healthcare has started to gain momentum. It is fuelled by the rising power of artificial intelligence (AI), the increasing popularity of mobile health applications, and the desire for engagement and usability. The past few years have seen a myriad of innovations in chatbots that can automate conversations and engage users in human-like dialogue.

Report: Cornelia Wels-Maug

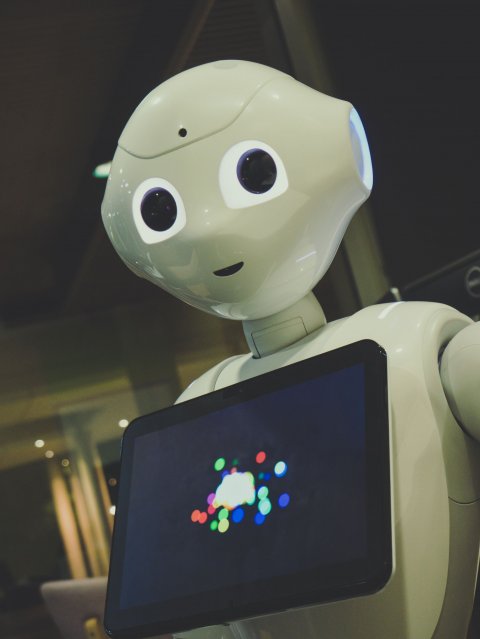

“Pepper” is arguably one of the most charming specimens in the field, showcasing the use of CUIs in a robot. Its parent company, SoftBank, describes its purpose like this: “Pepper is a robot designed for people. Built to connect with them, assist them, and share knowledge with them”.

At this year’s virtual Medica, the “Health IT Forum: Conversational User Interfaces - Tech Talk” explored several aspects of CUIs in healthcare. The moderator, Dr. Nana Bit-Avragim, Founder of iHospital InnoaLab, provocatively asked the panellists whether CUIs will become the primary channel of communication between patients and healthcare providers.

How does a CUI work?

CUIs are programs designed to communicate with a user and to provide or collect information by mimicking human interaction. They run on computers and other smart devices, but are mainly accessed via smartphone apps, with free text as the main input and output modality. The most advanced solutions employ AI techniques such as Natural Language Processing (NLP) and Machine Learning (ML) to help the chatbot understand context, intent and a user’s personal preferences. Applying ML allows the system to learn from data as it becomes available and to improve its performance.

Typically, the chatbot leads the user through a predefined conversation tree, with the dialogues addressing specific tasks. By simulating human responses, CUIs promise to drive the development of personalised healthcare by shaping end users’ behaviours and helping them adopt better health practices. They open up opportunities for patients and caregivers alike, stresses Bit-Avragim: for patients, CUIs facilitate access to diagnostics and treatment when a human doctor is not available or not needed, while health professionals can do their daily work more effectively by interacting in a simplified manner with machines and software that use AI-based algorithms.
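To make the idea of a predefined conversation tree more concrete, here is a minimal, hypothetical sketch in Python. The appointment-booking scenario, node names and prompts are invented for illustration and do not describe any product mentioned in this article; it also uses exact keyword matching where a real CUI would apply NLP-based intent recognition.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step in a predefined conversation tree (hypothetical example)."""
    prompt: str
    # Maps a normalised user answer to the id of the next node.
    # An empty dict marks a leaf node that ends the dialogue.
    options: dict[str, str] = field(default_factory=dict)


# Hypothetical appointment-booking dialogue; a real CUI would use
# NLP-based intent detection rather than exact keyword matches.
TREE = {
    "start": Node("Do you want to book an appointment or check symptoms?",
                  {"book": "book", "symptoms": "symptoms"}),
    "book": Node("Morning or afternoon?",
                 {"morning": "confirm_am", "afternoon": "confirm_pm"}),
    "symptoms": Node("Please describe your symptoms; a clinician will review them."),
    "confirm_am": Node("Booked a morning slot. Goodbye!"),
    "confirm_pm": Node("Booked an afternoon slot. Goodbye!"),
}


def run_dialogue(answers, tree=TREE, start="start"):
    """Walk the tree with a scripted list of user answers; return the transcript."""
    transcript = []
    node_id = start
    replies = iter(answers)
    while True:
        node = tree[node_id]
        transcript.append(("bot", node.prompt))
        if not node.options:  # leaf node: the conversation ends here
            return transcript
        reply = next(replies, None)
        if reply is None:  # the user stopped answering
            return transcript
        transcript.append(("user", reply))
        key = reply.strip().lower()
        if key in node.options:
            node_id = node.options[key]
        else:
            # Unrecognised input: stay on the same node and ask again.
            transcript.append(("bot", "Sorry, I did not understand that."))


if __name__ == "__main__":
    for speaker, text in run_dialogue(["book", "morning"]):
        print(f"{speaker}: {text}")
```

The fixed tree keeps the dialogue predictable, but it also exposes the weakness discussed under Limitations: any answer outside the predefined options forces the bot to repeat its question.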

Real-life CUI use cases

There are already several mobile health apps on the market that integrate CUIs. The most common areas of application appear to be treatment and monitoring, healthcare service support, and patient education: CUIs can help patients book appointments for treatment, or help hospitals check in on patients’ health and monitor their vital signs regularly after discharge. There are also CUI-based symptom checkers such as Babylon Health, and chatbots that aim to support behaviour change, such as X2AI, a “mental health chatbot who delivers emotional wellness coping strategies”.

Image source: Unsplash/Owen Beard

Panellist Roman Rittweger, CEO and Founder of Ottonova, a private digital health insurer, explains that Ottonova combines digital services and premium insurance tariffs with personal consultation using CUIs. “This is our answer to the requirements of today’s digital generation”, says Rittweger. He believes “CUIs are the ideal tool to explain the complicated world of health insurance to our customers” and to nudge them towards a healthier life. Rittweger is convinced that CUIs are “the future of interaction between health insurers and customers.”

Another discussant at the Medica Forum, Roberto Iannone, CEO and Founder of Zoundream, sees huge supportive potential in this technology. His company Zoundream applies AI and sound recognition to newborns’ cries, translating the sounds “into their needs, emotions and physical status” to support young parents. “Our technology can be integrated into a baby monitor. CUIs are for us a little more than you might think initially”, says Iannone, pointing out that meaning lies not only in what we say, but in how we say it. “By analysing a baby’s cry we can identify more information and offer 360 degrees of communication”, he explains.

“CUIs have huge potential as they allow people to speak in their own language and easily access medical information”, says Anette Ströh, Service Design Lead at Designit, another participant. But she also cautions: “Never underestimate the human touch in healthcare; sometimes you need to talk to a person or need human touch”.

Limitations

Perceiving emotions and responding adequately are key factors in a successful CUI-based solution. “Although CUIs are getting better, sometimes a real person needs to intervene as CUIs don’t understand everything”, says Ströh. Language recognition only goes so far.

Conversations with chatbots can become exhausting when the system fails to understand the user or when too many interactions are needed, so that users lose interest in the chatbot. “User experience is an important consideration”, Rittweger adds. “If you contact a company via a chatbot to solve a problem and end up being frustrated, the system is not well designed. Chatbots need to solve a user’s problem and not create more frustration.” This points to the general need to evaluate CUIs for acceptability, usability, safety and effectiveness.

Future of CUIs in healthcare

The panellists at Medica were confident that CUIs will become a primary interface to healthcare, but not necessarily the primary one. Speech will be important, Ströh thinks, but it will coexist with other forms of access. Panellist Katina Sostmann, Executive Creative Director & Lead Health iX, IBMiX, agrees: “There will still be visual interfaces like buttons, but normal language will be the standard for any kind of human-machine interaction. It gets everyone on the same page to access information”, she is convinced.

17.12.2020